本篇用于备考大二下的算法设计课程,其中会总结已学到的算法,每个算法会给出思想和伪代码,对于复杂的算法会做出详尽的解释。

Introduction

设计算法的5个要求

- Finiteness。terminates after a finite number of steps

- Definiteness。rigorously and unambiguously specified

- Input。valid inputs are clearly specified

- Output。can be proved to produce the correct output given a valid input

- Effectiveness。steps are sufficiently simple and basic

GCD problem

GCD是greatest common divisor的简写,也就是找到两个数的最大公约数。

Euclid’s algorithm

欧几里得算法基于以下的公式实现:gcd(m,n) = gcd(n, m mod n)

//Input:int m,int n

//Output:int

while(n!=0){

r=m mod n;

m=n;

n=r;

}

return m;

欧几里得算法其实用到了后面学的Decrease-and-Conquer思想,要求gcd(m,n)的值,将其减少规模,变成求gcd(n,m mod n)的值。

质数问题

想要判断一个数n是否为质数,很容易想到的是我们只需遍历所有比n小的大于1的数i,判断n mod i==0,复杂度是O(n),其中一个优化是只需判断[2,n^(1/2)]内的数即可,复杂度是O(n^1/2)。

但是如果输入是n,我们要输出一个包含所有小于n的质数的集合呢?

一种简单的解决思路是,对每个小于n的质数运用一次质数检验算法:

//Input:Integer n ≥ 2

//Output:List of primes less than or equal to n

for i=2 to n do

if(prime(i)) add i to list;

这个算法的复杂度是多少呢?prime(i)的复杂度是O(n^1/2),外面还有一层循环,于是这个算法的复杂度是O(n^3/2)。

其实有另外的解决办法。

Sieve of Eratosthenes

Input: Integer n ≥ 2

Output: List of primes less than or equal to n

for p ← 2 to n do A[p] ← p

for p ← 2 to n do

if A[p] != 0 //p hasn’t been previously eliminated from the list

j ← p* p

while j ≤ n do

A[j] ← 0 //mark element as eliminated

j ← j + p

//copy the remaining elements of A to array L of the primes i ← 0 for p ← 2 to n do

if A[p] != 0

L[i] ← A[p]

i ← i+1 return L

这个算法在做一件什么事情呢?从2开始,将每个质数的倍数标记,剩下的就全都是质数了。 此算法的复杂度分析在这里就不做了。

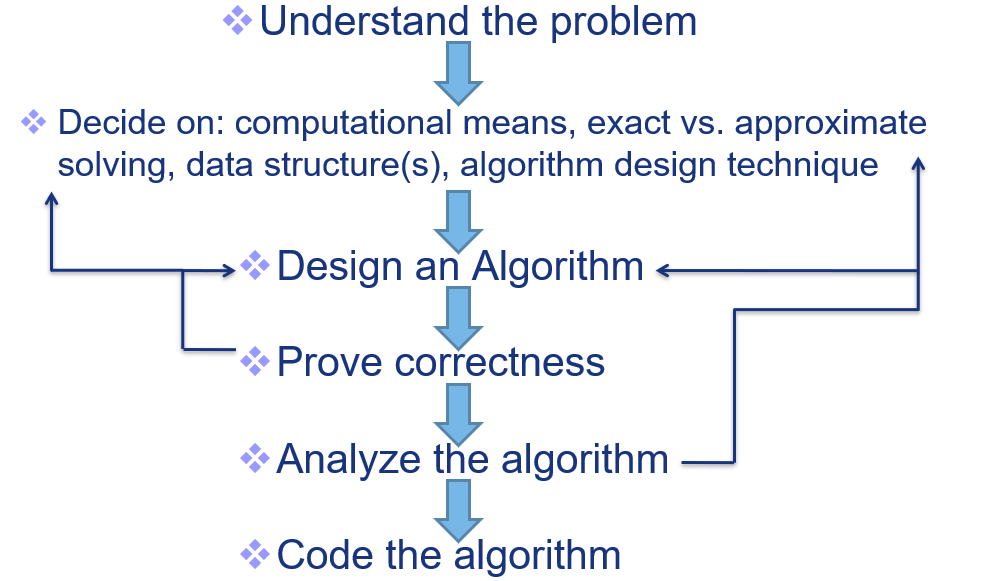

Algorithm design and analysis process

Fundamentals of the Analysis of Algorithm Efficiency

A problem or algorithm with at most polynomial time complexity is considered tractable (or feasible). P is the set of all tractable problems. A problem or algorithm that has complexity greater than exponential is considered intractable (or infeasible). Note that n^1,000,000 is technically tractable, but really very hard. n^(log log log n) is technically intractable, but easy. Such cases are rare though.

Brute Force

Brute-force Sorting

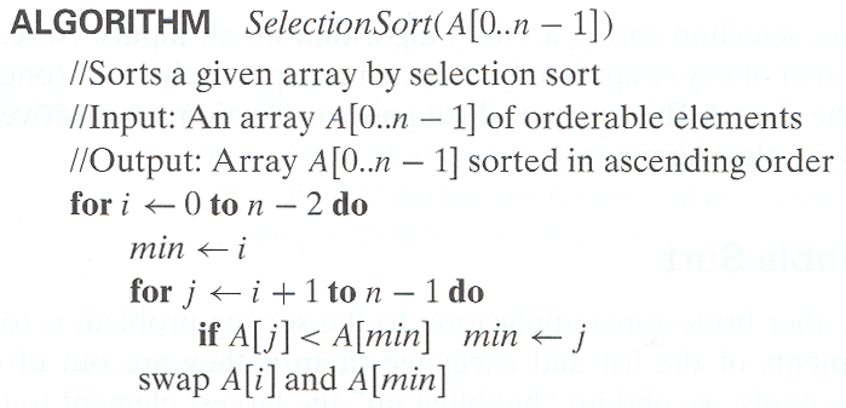

selection sort:

time complexity:Θ(n^2)

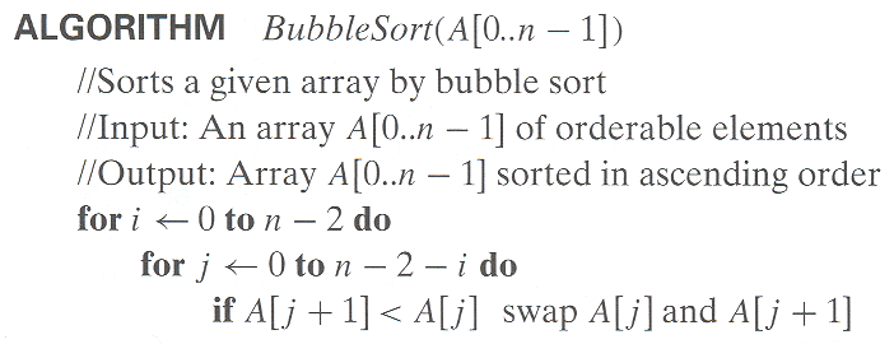

bubble sort:

time complexity:Θ(n^2)

Divide-and-Conquer

主定理

主定理的使用请看这篇博文:《Introduction to Algorithm》notes:Three methods for solving recurrences。

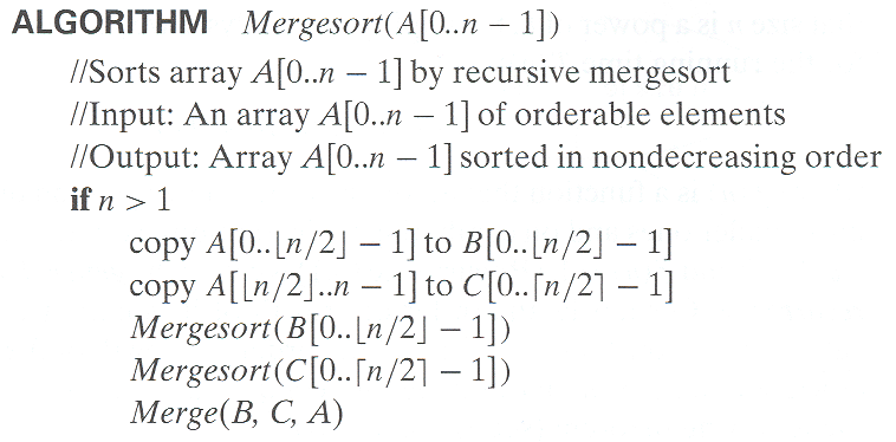

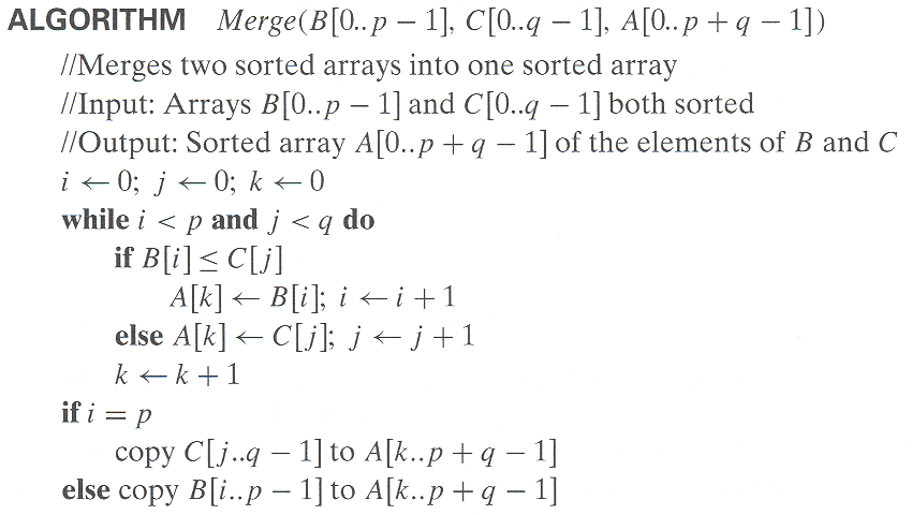

MergeSort

Mergesort无论在最差平均还是最好情况下,time complexity都是Θ(nlogn)。

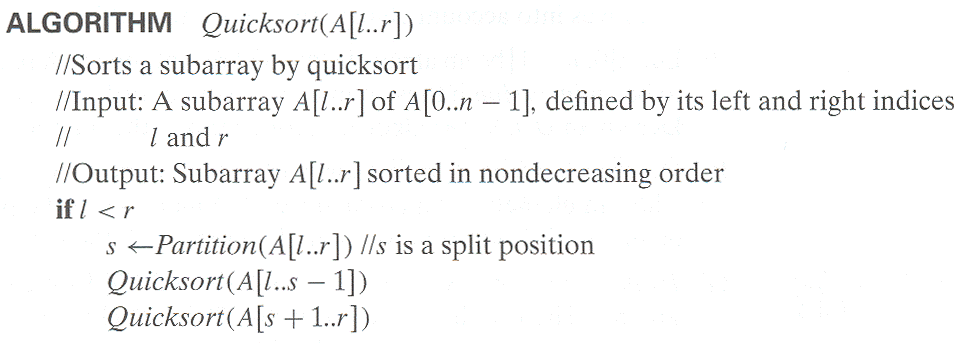

Quicksort

Best case: split in the middle — Θ(n log n)

Worst case: sorted array! — Θ(n2)

Average case: random arrays — Θ(n log n)

Decrease-and-Conquer

Types of Decrease and Conquer

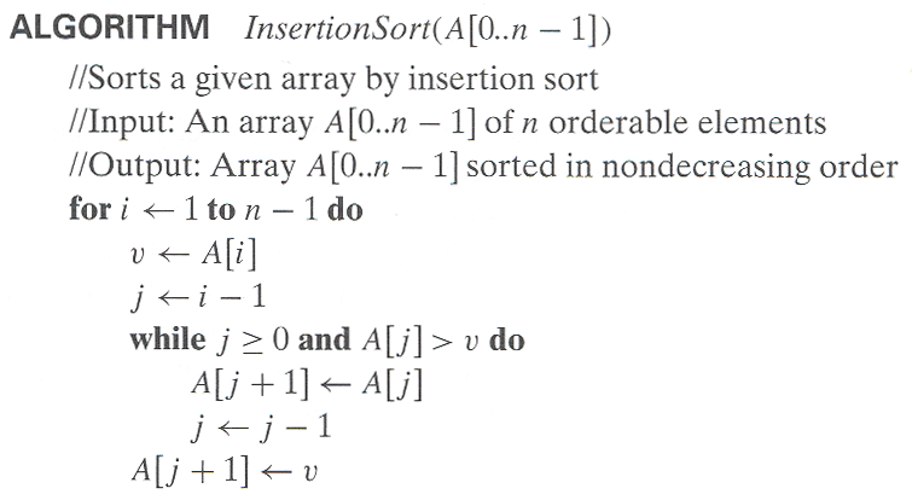

- Decrease by a constant (usually by 1): insertion sort/graph traversal algorithms (DFS and BFS)/topological sorting/algorithms for generating permutations, subsets

- Decrease by a constant factor (usually by half):binary search and bisection method/exponentiation by squaring/multiplication à la russe

- Variable-size decrease:Euclid’s algorithm/selection by partition/Nim-like games

InsertionSort

Time efficiency

Cworst(n) = n(n-1)/2∈Θ(n2)

Cavg(n) ≈ n2/4∈Θ(n2)

Cbest(n) = n - 1∈Θ(n) (also fast on almost sorted arrays)

Space efficiency: in-place

Stability: yes

Best elementary sorting algorithm overall

Binary insertion sort

Transform-and-Conquer

AVL树

熟悉好rotation操作就Ok了。 Search and insertion are O(log n) Deletion is more complicated but is also O(log n)

HeapSort

Both worst-case and average-case efficiency: (nlogn)

In-place: yes

Stability: no (e.g., 1 1)